Earlier this year, Instagram launched a feature that flags potentially offensive comments before they’re posted. Now the social media platform is extending this preemptive flagging system to captions. The new feature will warn users when the caption they’ve written for a feed post is one that Instagram’s AI detects as similar to captions that have already been reported for bullying.

It will not, however, block users from publishing their hateful remarks. Instead, Instagram says, these small nudges give users time to reconsider their words. The company says it found this approach helped cut down on bullying in the comments section after the earlier feature launched.

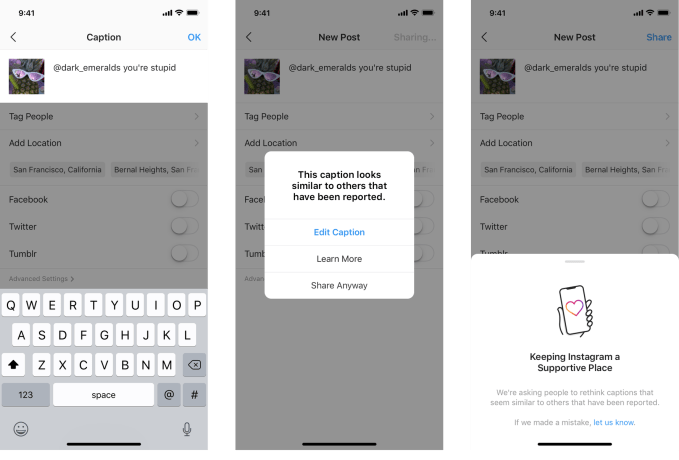

Once the feature is live, users who write a caption that appears to be bullying will see a notification that says: “This caption looks similar to others that have been reported.” From there, they can edit the text, tap a button to learn more, or share the post anyway.

Beyond reducing online bullying, Instagram hopes the feature will teach users what isn’t allowed on the platform and when their accounts could be at risk of being disabled for rule violations.

While the new warning doesn’t stop anyone from posting negative or abusive captions, Instagram has rolled out a number of other features to help people affected by online bullying. For example, users can turn off comments on individual posts, remove followers, filter comments, mute accounts and more. This year, the company also began downranking borderline content, which gives accounts that come close to breaking the rules, but not close enough to be banned, less incentive to post violent or hurtful content in pursuit of Instagram fame.

Unfortunately for Instagram’s more than a billion users, these sorts of remedies have arrived years too late. Only today did the company begin fact-checking posts for misinformation globally.

Like most of today’s social media platforms, Instagram wasn’t designed with much thought given to how people who want to hurt others could abuse it. In fact, the realization that technology could cause real harm came late to Instagram and its peers, which are still struggling to build workforces where a variety of viewpoints and experiences are considered.

Flagging remarks that appear to be bullying is certainly a welcome addition, but it’s disappointing that such a feature arrived 10 years after Instagram’s founding. And while advances in AI may make the tool more powerful and useful as it rolls out next year, a simpler version could have existed long ago and been improved over time.

Instagram says the feature will roll out gradually: first in select markets, then globally in the “coming months,” which means 2020.
